On 19 Feb 2026, the updated AI Agent Index (maintained by researchers from MIT, Cornell University and other institutions) was published with new data tracking the evolution of agentic AI systems. I came across the paper and its associated site while researching my previous post, “Agents of Chaos: The Unquantifiable Risk at the Heart of the AI Revolution”.

If you have not looked at it yet, it is worth your time. It is one of the clearest snapshots we have of the current state of autonomous AI and where it is going.

Here are some useful links:

- AI Agent Index (Website)

- The 2025 AI Agent Index: Documenting Technical and Safety Features of Deployed Agentic AI Systems (Paper)

The summary is simple.

- Autonomy is accelerating.

- Governance is not keeping pace.

- And that gap is going to matter.

The Acceleration of Autonomy

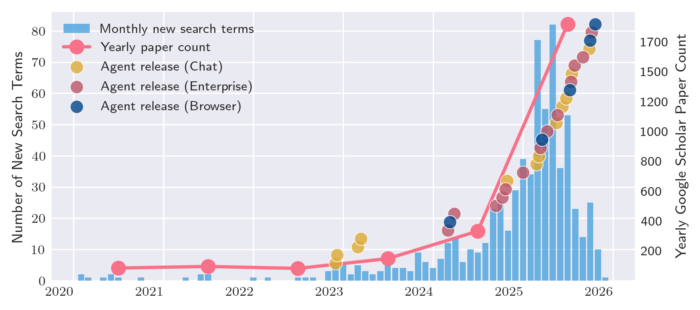

In the last two years alone, 24 of the 30 tracked agents were either released or received major updates. That is not incremental innovation; it is acceleration (a gold rush).

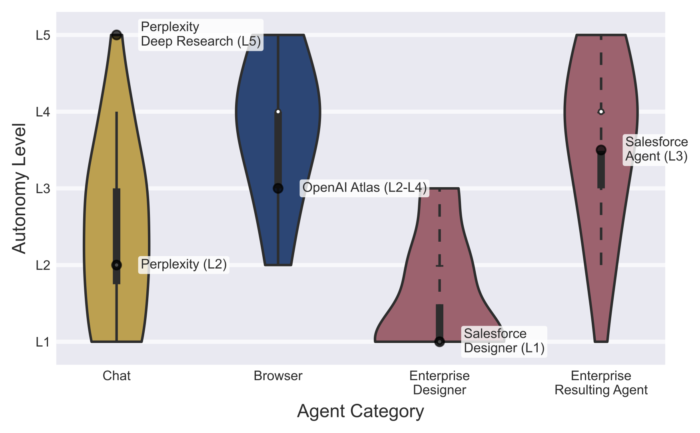

- Browser agents are now operating at L4–L5 autonomy. That means they do not just assist. They execute. They plan. They take multi-step actions with limited intervention once started.

- Enterprise agents follow a similar path. They may be designed conservatively, but once deployed into workflows, they begin to operate at far higher levels of independence.

We are no longer dealing with tools that help you think (like simple chatbots); we are deploying systems that act on your behalf. This changes everything.

With this acceleration and increase in autonomy, the key question becomes how we maintain security, governance and visibility (not to mention control).

The Safety (Governance) Disclosure Gap

Of the 13 agents classified at frontier autonomy levels, only four disclose any meaningful agentic safety evaluations:

- ChatGPT Agent

- OpenAI Codex

- Claude Code

- Gemini 2.5 Computer Use

The broader numbers are more revealing.

- 25 of 30 agents disclose no internal safety results.

- 23 of 30 provide no third-party testing information.

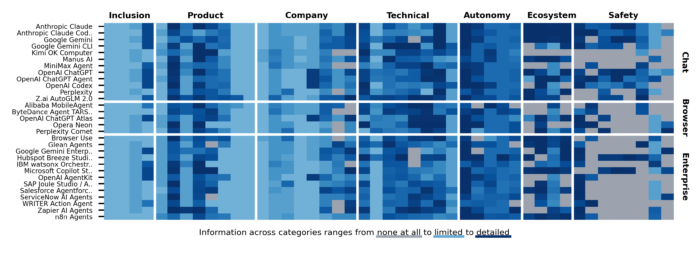

Developers are very transparent about what their agents can do, but far less transparent about how those agents were tested.

For enterprises operating under ISO 27001, ISO 42001, SOC 2, NIST, or sector regulation, this is not academic. It is a procurement risk. It is a board-level question waiting to be asked.

Just have a look at the “Safety” section of the disclosure map.

The Fragmented Responsibility Chain and Concentrated Model Providers

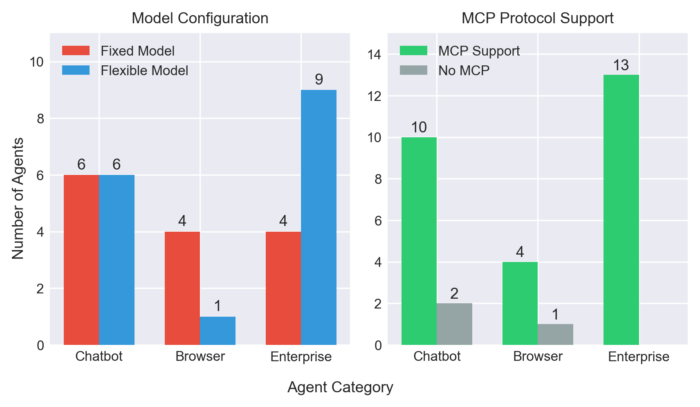

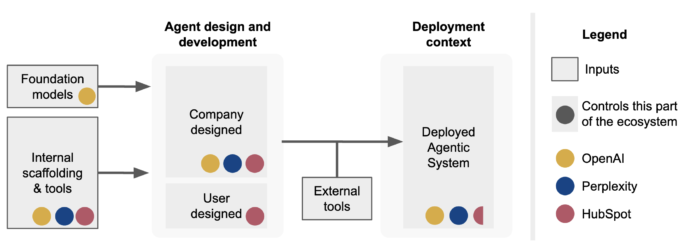

Most agents are not vertically integrated systems; they sit on top of foundation models supplied primarily by three players: OpenAI, Anthropic and Google.

The agent builder adds orchestration and scaffolding. The enterprise adds configuration and deployment context. When something goes wrong, responsibility is distributed across layers. Who is to blame: the model, the agent framework, the integrated tools, or whoever configured the deployment?

This reminds me very much of the early days of cloud adoption, when abstraction created acceleration but could not fix bad architecture, design or configuration. In the case of cloud, shared responsibility models took years to become clear. Contracts evolved. Audit rights became standard. Logging expectations stabilised. Obligations for data processing and storage became clear.

We are now at the early stage of that same cycle, but with systems that can act autonomously. No single entity clearly owns end-to-end accountability, and that is what makes this different from cloud today.

That is, yet again, a governance problem, not a technical one.

The Web Identity Problem

Browser-based agents introduce another layer of complexity. They simply act “on behalf of a user.” In practice, that often means bypassing traditional bot controls and ignoring conventional web signaling mechanisms such as robots.txt. It also raises a huge question of identity and accountability for actions (but that is a whole other discussion). It is therefore shocking that only one agent in the index, ChatGPT Agent, uses cryptographic request signing.
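To make “cryptographic request signing” concrete, here is a minimal sketch loosely modelled on the IETF HTTP Message Signatures standard (RFC 9421). It is not a description of how ChatGPT Agent does it. The key ID and secret are hypothetical, and the sketch uses the symmetric `hmac-sha256` algorithm only because Python’s standard library supports it. A public-facing agent would more plausibly sign with an asymmetric key (for example Ed25519), so that any website can verify requests against a published public key.

```python
import base64
import hashlib
import hmac
import time

# Hypothetical key identifier and shared secret, for illustration only.
DEMO_KEY_ID = "demo-agent-key"
DEMO_SECRET = b"not-a-real-secret"

# The parts of the request covered by the signature (RFC 9421 derived components).
COMPONENTS = ("@method", "@authority", "@path")


def signature_params(created: int, key_id: str) -> str:
    """Serialise the covered components plus metadata, RFC 9421 style."""
    covered = " ".join(f'"{c}"' for c in COMPONENTS)
    return f'({covered});created={created};keyid="{key_id}";alg="hmac-sha256"'


def signature_base(method: str, authority: str, path: str, params: str) -> str:
    """Build the exact string that gets signed: one line per covered component."""
    # RFC 9421 leaves the method's case untouched but normalises the host to lowercase.
    values = {"@method": method, "@authority": authority.lower(), "@path": path}
    lines = [f'"{c}": {values[c]}' for c in COMPONENTS]
    lines.append(f'"@signature-params": {params}')
    return "\n".join(lines)


def sign_request(method, authority, path, created=None,
                 key_id=DEMO_KEY_ID, secret=DEMO_SECRET):
    """Return the Signature-Input and Signature headers for an outbound request."""
    created = int(time.time()) if created is None else created
    params = signature_params(created, key_id)
    base = signature_base(method, authority, path, params)
    mac = hmac.new(secret, base.encode("utf-8"), hashlib.sha256).digest()
    return {
        "Signature-Input": f"sig1={params}",
        "Signature": f"sig1=:{base64.b64encode(mac).decode('ascii')}:",
    }


def verify_request(method, authority, path, headers, secret=DEMO_SECRET) -> bool:
    """Receiving side: rebuild the signature base and compare in constant time."""
    params = headers["Signature-Input"].removeprefix("sig1=")
    base = signature_base(method, authority, path, params)
    expected = hmac.new(secret, base.encode("utf-8"), hashlib.sha256).digest()
    encoded = headers["Signature"].removeprefix("sig1=:").removesuffix(":")
    return hmac.compare_digest(expected, base64.b64decode(encoded))


if __name__ == "__main__":
    headers = sign_request("GET", "example.com", "/orders/42", created=1700000000)
    print(headers["Signature-Input"])
    # sig1=("@method" "@authority" "@path");created=1700000000;keyid="demo-agent-key";alg="hmac-sha256"
    print(verify_request("GET", "example.com", "/orders/42", headers))  # True
    print(verify_request("GET", "example.com", "/orders/43", headers))  # False
```

The point for governance is the last two lines: a signed request ties an action to a specific key, and any change to the covered parts of the request breaks verification. That is the raw material for identity, attestation and audit.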

We do not yet have a mature identity model for autonomous agents operating across the web. If an agent logs into a system, accesses content, or triggers transactions, what is its identity? How is that identity verified? How is it attested? How is it audited?

In identity governance terms, we are allowing non-human actors to execute high-impact workflows without fully established control frameworks. We would never allow that for a human privileged account.

Yet we are beginning to allow it for AI.

The Governance Opportunity

This is not only a risk story. It is an opportunity story. As autonomy increases, the differentiator will not be raw power. It will be governability.

Enterprises, our businesses and our customers will need to start asking harder questions:

- What autonomy level does this agent operate at?

- What red-teaming and safety testing was performed?

- Is there third-party validation?

- Are outbound actions signed and traceable?

- Is there an auditable trail of decisions?

- Is there a clear kill-switch?
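Two of these questions, an auditable trail of decisions and a clear kill-switch, are easy to ask and harder to evidence. One common way to make an audit trail tamper-evident is to hash-chain the entries, so that editing any past record breaks the chain. The sketch below is a hypothetical illustration, not something drawn from the index: the class names, fields and the “record before acting” convention are all assumptions.

```python
import hashlib
import json
from dataclasses import dataclass, field

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


@dataclass
class AuditEntry:
    seq: int
    agent_id: str
    action: str
    detail: dict
    prev_hash: str
    entry_hash: str


def _digest(seq: int, agent_id: str, action: str, detail: dict, prev_hash: str) -> str:
    """Hash a canonical JSON form of the entry together with the previous hash."""
    payload = json.dumps(
        {"seq": seq, "agent_id": agent_id, "action": action,
         "detail": detail, "prev": prev_hash},
        sort_keys=True, separators=(",", ":"),
    )
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


@dataclass
class AgentAuditLog:
    entries: list[AuditEntry] = field(default_factory=list)
    halted: bool = False  # hypothetical kill-switch flag

    def record(self, agent_id: str, action: str, detail: dict) -> AuditEntry:
        """Log an action. Assumes the agent calls this BEFORE acting,
        so refusing to record is what actually stops the agent."""
        if self.halted:
            raise PermissionError("kill switch engaged: action refused")
        prev = self.entries[-1].entry_hash if self.entries else GENESIS
        seq = len(self.entries)
        entry = AuditEntry(seq, agent_id, action, detail, prev,
                           _digest(seq, agent_id, action, detail, prev))
        self.entries.append(entry)
        return entry

    def kill(self) -> None:
        """Engage the kill switch: no further actions will be recorded."""
        self.halted = True

    def verify(self) -> bool:
        """Recompute every hash; any edited or reordered entry breaks the chain."""
        prev = GENESIS
        for i, e in enumerate(self.entries):
            if e.seq != i or e.prev_hash != prev:
                return False
            if _digest(e.seq, e.agent_id, e.action, e.detail, e.prev_hash) != e.entry_hash:
                return False
            prev = e.entry_hash
        return True


if __name__ == "__main__":
    log = AgentAuditLog()
    log.record("browser-agent-01", "navigate", {"url": "https://example.com/checkout"})
    log.record("browser-agent-01", "submit_form", {"form": "payment"})
    print(log.verify())  # True
    log.entries[0].action = "read_only"  # simulate someone rewriting history
    print(log.verify())  # False
```

Hash-chaining only makes tampering detectable, not impossible. In practice the chain head would also need to be anchored somewhere the agent operator cannot quietly rewrite.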

Vendors that can answer those questions confidently will win in regulated markets. Traditional identity governance and administration (IGA) and privileged access management (PAM) processes and platforms will need to evolve to meet the demand for accountability while still allowing for speed of execution.

We are entering a new domain: not merely human versus non-human identity, but a merging of:

- Agent identity.

- Agent permissions.

- Agent attestation.

- Agent auditability.

Those who work in governance, security, identity, and compliance should not see this as a threat. It is the next frontier of structured control and visibility.